When AI Goes On The Offense

AI red teaming and offensive security is having its moment, and just getting started

This week, over 43,000 security professionals descended on San Francisco for the RSA Conference, the cybersecurity industry’s annual main event. What began as a highly specialized, single-panel event on digital signature standards has evolved into one of the largest technology conferences globally.

Amidst all the buzz around AI-enabled security, zero trust principles, and control planes for autonomous agents, one subsector of security has been having its moment in the sun: offensive security. In just the past two weeks, over $350M has been poured into startups building AI systems designed to do one thing: hack you before someone else does.

XBOW, founded by one of the creators of GitHub Copilot, raised $120M at a $1B+ valuation. Armadin, led by Kevin Mandia (who built Mandiant and sold it to Google for $5.4B), launched with a record $190M seed and Series A. RunSybil, co-founded by OpenAI’s first security researcher, raised $40M led by Khosla Ventures with Anthropic’s Anthology Fund participating. And there are several other companies led by highly sophisticated security and AI teams that are quietly amassing significant revenue.

That’s a lot of capital moving into a category that barely existed two years ago. So what’s going on?

The Attack Surface Just Changed

For decades, security testing worked on a simple model: hire a team of experts, point them at your systems for a few weeks, get a report. Repeat annually, or maybe quarterly if you were diligent. The assumption was that the attack surface changed slowly enough for periodic human-led assessments to keep pace.

That assumption is breaking down. Two things are happening simultaneously.

First, the attack surface is expanding faster than ever. Engineering teams ship code continuously. AI coding assistants are accelerating the volume of code being written and deployed. Vibe coding is producing applications at a pace that would have been unimaginable even a year ago. Every new deployment, every new API endpoint, every new integration is a potential entry point — and the gap between what’s been built and what’s been tested is widening.

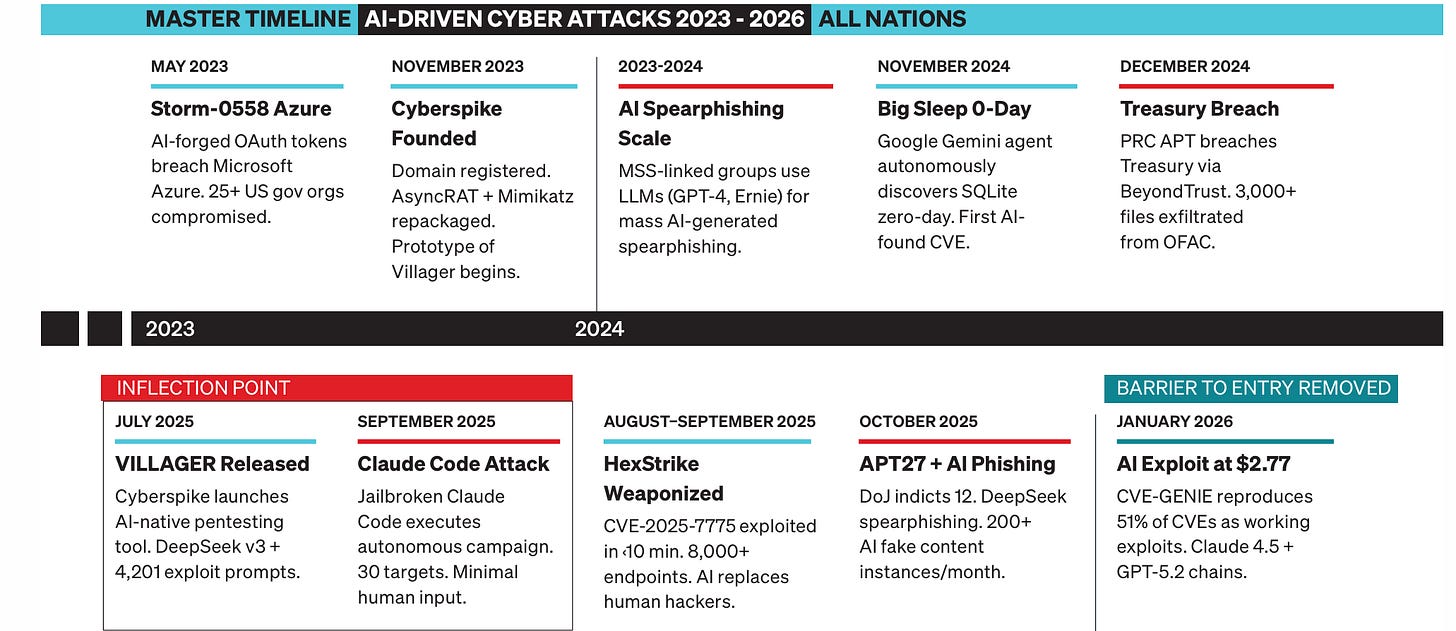

Second, and more importantly, attackers are getting faster. AI doesn’t just help defenders. It helps the offense too. The same reasoning capabilities that power coding assistants can be turned toward discovering vulnerabilities, crafting exploits, and chaining together attack paths. What used to take a skilled human red teamer days can now happen in minutes. A report from Booz Allen concluded that threat actors have adopted AI more quickly than governments and private companies have adopted it for cyber defense.

For example, when the Cybersecurity and Infrastructure Security Agency adds a CVE to its Known Exploited Vulnerabilities list, defenders are given 15-day timelines to implement a patch. That would be insufficient for something like HexStrike, an open source AI security framework popular with cybercriminals that exploited “thousands” of Citrix Netscaler products in less than 10 minutes using a single critical CVE.

This creates an asymmetry that traditional security testing simply cannot address. You can’t defend at human speed against attacks that move at machine speed.

Enter the Autonomous Red Team

The companies raising hundreds of millions right now are all converging on a similar thesis: offensive security needs to become continuous, autonomous, and AI-native.

XBOW applies AI reasoning and adversarial workflows modeled on real-world attack techniques, continuously testing applications and validating vulnerabilities with actual exploitation - not theoretical risk scores. They reached the top of HackerOne’s leaderboard and are deployed at Fortune 500 companies. RunSybil takes a black-box approach, where AI agents probe live systems from the outside without source code access, mimicking how a real attacker would operate. Armadin is building what it calls an “agentic attacker swarm” - specialized AI agents that reason, plan, and adapt like sophisticated threat actors, running 24/7 across the entire attack surface.

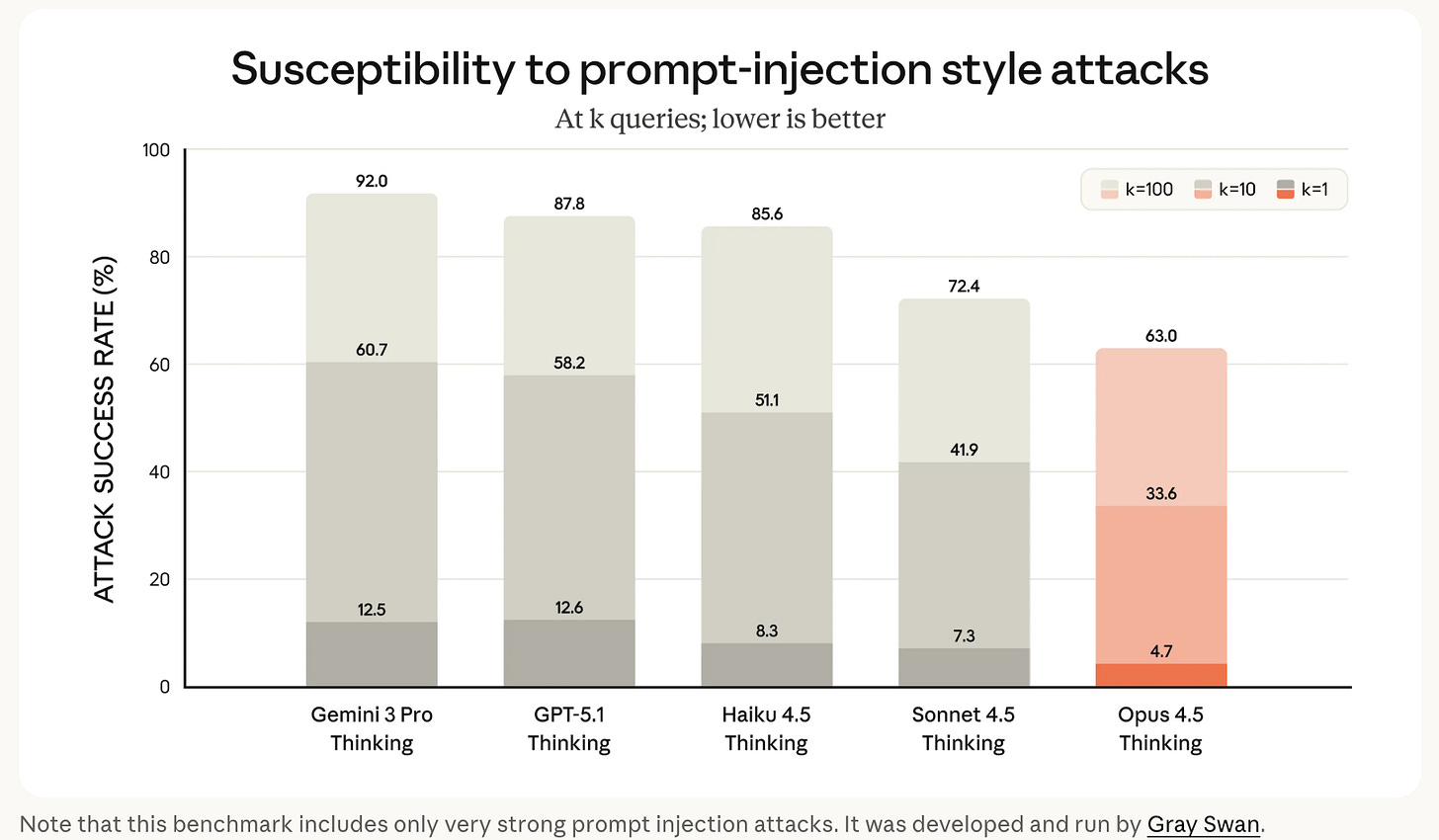

Another compelling company in this space is Gray Swan, founded by two professors out of Carnegie Mellon University. Gray Swan operates security tournaments connecting thousands of security researchers in competitive environments to discover novel attack vectors against top frontier models. They utilize these proprietary insights and data to pressure test AI models deployed inside enterprises and agents in real-time. This unique, highly complementary set of products has propelled Gray Swan to be trusted by Anthropic, OpenAI, Meta, Google DeepMind, Bytedance, etc.

The common thread is a shift from point-in-time to continuous, and from human-led to AI-augmented. These aren’t fancy scanners. They’re autonomous systems that chain together vulnerabilities, reason about context, and validate real exploit paths — the kind of deep, creative work that previously required elite (and very expensive) human talent.

Why This Matters Beyond Security

We spend a lot of time thinking about where AI creates durable value versus where it’s simply a wrapper on a foundation model. AI red teaming is an area we believe will birth a massive, generational company. Here’s why:

The problem compounds with AI adoption itself. As more enterprises deploy AI agents — systems that autonomously take actions, access data, and interact with other systems — the attack surface doesn’t just grow, it changes in kind. An AI agent that can query databases, call APIs, and execute code introduces entirely new classes of vulnerabilities: prompt injection, tool misuse, privilege escalation through reasoning chains. OWASP just published a Top 10 specifically for agentic applications. The more AI gets deployed, the more AI-native security testing is needed. The tailwind is structural.

Domain expertise compounds. These aren’t products you can build by wrapping GPT-4 in a nice UI. RunSybil’s founders built security at OpenAI and ran red teams at Meta. Armadin’s team includes decades of incident response experience across nation-state level engagements. Gray Swan spent years perfecting its “Arena” model and nurturing thousands of the world’s top red-teamers. The models need to be trained on real adversarial behavior, real exploit chains, real-world edge cases. That expertise is scarce and compounds over time as the systems encounter more environments and refine their attack strategies.

The buyer is already spending. CISOs have budget for penetration testing. This isn’t a new line item — it’s a better, faster version of something enterprises already pay for. The average cost of a data breach is around $4.5M. Annual pentesting engagements typically run $20K-$200K+ and cover a fraction of the attack surface. The ROI math on continuous, autonomous testing is compelling, especially as regulatory frameworks increasingly demand ongoing security validation.

What We’re Watching

The AI red teaming space is moving fast, and there are a few dynamics we’re paying close attention to.

The first is the offense-defense feedback loop. As AI-powered offensive tools get better at finding vulnerabilities, defensive systems will need to evolve in response — which will in turn push offensive capabilities further. This creates a durable innovation cycle, not a one-time product opportunity.

The second is the regulatory tailwind. The EU AI Act, NIST’s AI Risk Management Framework, and evolving compliance requirements are pushing enterprises toward continuous security validation, not annual checkbox exercises. Companies that can provide auditable, repeatable, and comprehensive testing will have an advantage as regulatory expectations harden.

The third is the talent gap this solves. There simply aren’t enough elite human red teamers to meet demand. The cybersecurity talent shortage has been well-documented for years. AI-native offensive security doesn’t just make testing faster — it makes deep security expertise accessible to organizations that could never afford a world-class red team.

The Race Is On

We’re still in the early innings of understanding how AI reshapes cybersecurity. But the direction is clear: as AI agents become embedded in enterprise workflows, the systems that test and secure those agents need to be equally intelligent, equally autonomous, and equally persistent.

The $350M that just moved into this space in a matter of weeks isn’t hype chasing. It’s capital recognizing that the nature of the threat has fundamentally changed — and that the defenders need to catch up.

The best offense has always been a good offense. In the age of AI, that’s no longer a metaphor.