The Vibe Coding Hangover

In a world where anyone can ship anything, product taste and design craft become the moat that matters

It’s no surprise that AI has made shipping easier than ever. Ask any founder or PM what’s changed most about their job in the last eighteen months and you’ll hear some version of the same answer: we ship so much faster now. The best engineers (the ones not stuck in their old ways) say the same thing. Engineers feel 10x’d, and non-technical PMs are writing their own features. The barrier to getting something in front of users has collapsed.

The natural assumption that follows is that if everyone can ship faster, whoever ships fastest wins. Pour more code into production, iterate quicker, outpace the competition on features. Speed is the strategy. That assumption has held in the past, but we don’t believe speed alone will be a winning strategy in the future. When shipping velocity equalizes across an industry, the bottleneck doesn’t disappear; it relocates.

We expect the bottleneck to shift to deep product understanding. Most teams are not set up to solve this problem well, and the companies that figure it out will have a durable advantage. The ones that don’t will ship a lot of mediocre product very quickly.

Speed Is Now Table Stakes

Cursor crossed one million paid users. Claude Code is being used by engineering teams at companies of all sizes. Non-engineers are committing production code. The result is that the supply of shipped features has exploded, but user attention and user love have not expanded proportionally. There is more product in the world, and less patience for the bad stuff or the “AI slop” (we are approaching 50% of podcasts being AI slop!). When every competitor can spin up a working prototype in a weekend, the feature list stops being the differentiator. What separates the products people actually use from the ones they try once is something harder to copy than a tech stack.

That harder-to-copy thing is taste: a genuine, well-developed point of view on what a user needs from a product. Not just what they asked for, but what they actually want, in what form, at what moment. That judgment can’t be prompted into existence. It has to be developed, and it has to sit somewhere in the organization.

When everyone can build, the question is no longer “can you ship it?” The question is “should you ship it, and in what form?”

Non-Deterministic Systems Break All the Old Rules

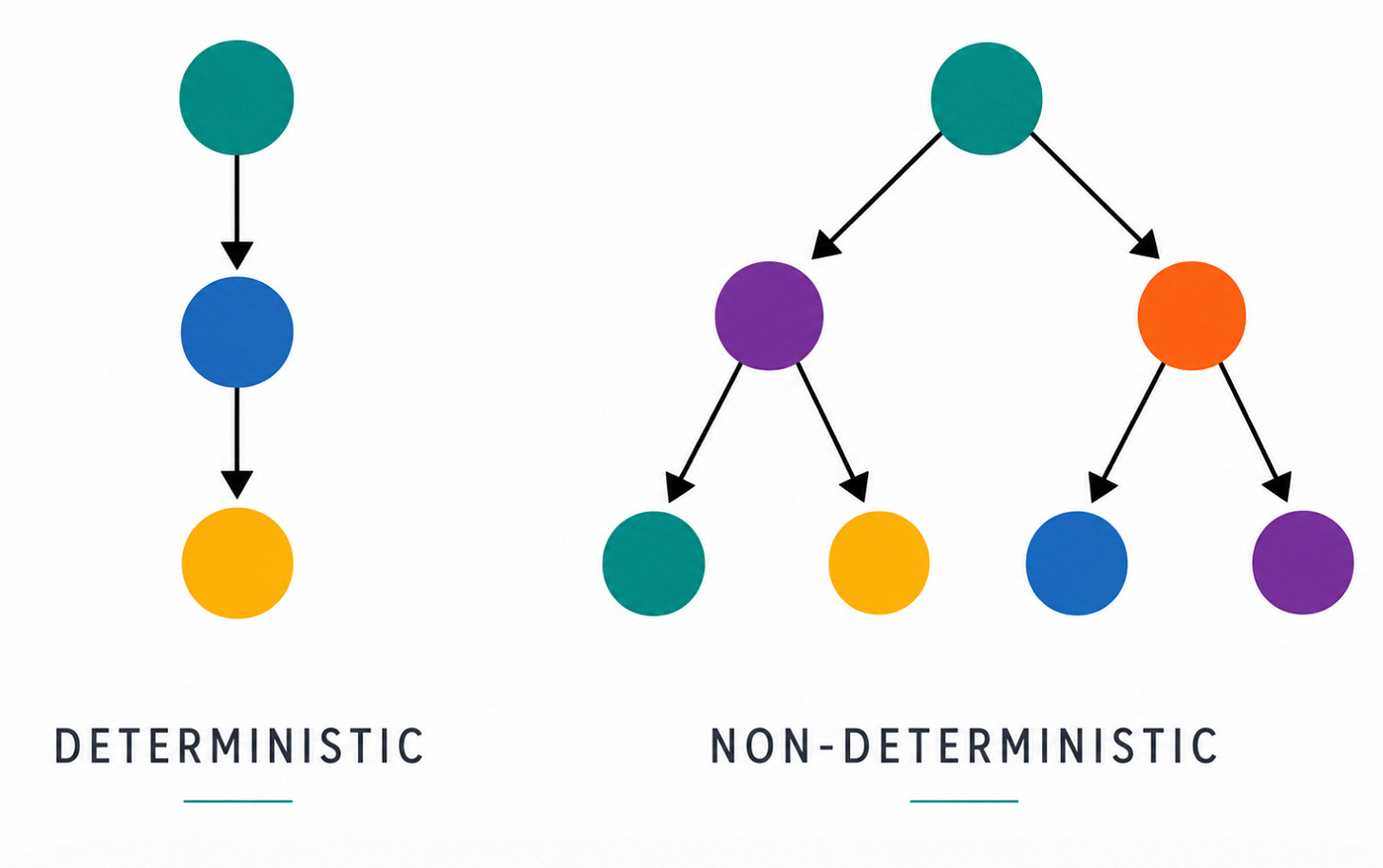

Traditional software has a simple design contract: user takes action → system produces predictable output. A button click returns a result. A form submission logs a record. A query returns a row from the database. Product designers and PMs have spent three decades optimizing within that contract. The output was fixed; the job was to make the path to it as clear and frictionless as possible.

AI systems break that contract entirely. The output can be almost anything. Every time a user queries an AI product, the model has to intuit what they mean — and the product has to make a prior decision about what the output should even look like. That decision doesn’t happen automatically. Someone has to make it, explicitly, in advance, for every meaningful interaction surface in the product. Linear’s CEO Karri Saarinen put it well in a piece published last month:

The hard part of design is understanding the problem well enough to know what and how something should exist at all… The risk is generating form and mistaking it for a solved problem

He was writing about AI design tools specifically, but the point generalizes. You can spin up a working interface in an afternoon now. That is not the same as having thought through whether it’s the right interface for the problem. AI has made the *execution* of product ideas dramatically cheaper. It has made the *decisions* about what to build, and how to present it, more consequential than ever. Non-determinism is not just a technical property of these systems — it is a product design challenge that has to be solved deliberately, or it will be solved accidentally, and usually badly.

New Product Questions Emerging

In many ways, AI products have something prior software never did: a personality. They don’t just respond, they interpret, infer, and develop a voice. That’s exciting, but it also introduces a new set of product design challenges. Here are a few questions that we’ve been thinking through as we’ve tested dozens of new AI products and worked with many of our companies to think through the right design choices:

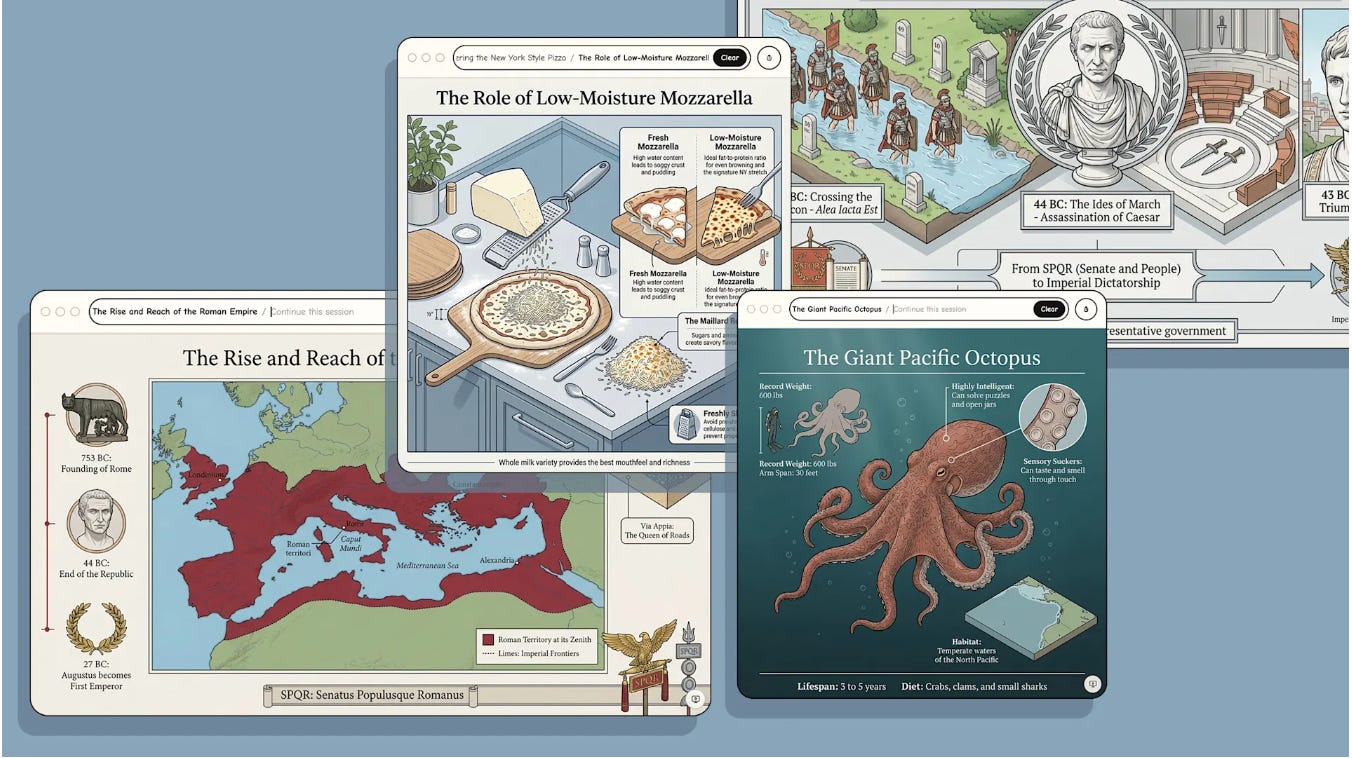

What is the output contract? For every user intent, what form should the output take: a summary, a table, a chart, an image, or an action taken on their behalf? If an action, do you show the user what’s happening or abstract it away? These have to be explicit decisions made in advance, not defaults that emerged from testing. If you can’t articulate why your product shows a chart instead of a paragraph, you haven’t made a product decision yet. Different products will arrive at very different answers, and understanding what your customer wants, and when, will be a critical part of the moat.
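One way to make this concrete is to write the contract down as data, so that every intent carries both its output form and the reason for it. The sketch below is a minimal illustration under our own assumptions: the intents, the `OutputContract` fields, and the `contract_for` helper are hypothetical names, not a description of how any particular product works.

```python
from dataclasses import dataclass
from enum import Enum


class OutputForm(Enum):
    SUMMARY = "summary"
    TABLE = "table"
    CHART = "chart"
    IMAGE = "image"
    ACTION = "action"


@dataclass(frozen=True)
class OutputContract:
    intent: str          # hypothetical intent label
    form: OutputForm     # the form the output must take
    rationale: str       # why this form; forces the decision to be explicit
    show_steps: bool = False  # for actions: show the user what is happening?


# Hypothetical contracts for an imaginary billing assistant.
CONTRACTS = {
    c.intent: c
    for c in [
        OutputContract("compare_plans", OutputForm.TABLE,
                       "Users scan options side by side."),
        OutputContract("spend_over_time", OutputForm.CHART,
                       "The trend matters more than any single value."),
        OutputContract("cancel_subscription", OutputForm.ACTION,
                       "Users want it done but need to see each step.",
                       show_steps=True),
    ]
}


def contract_for(intent: str) -> OutputContract:
    """Return the contract for an intent, or fail loudly if nobody decided."""
    if intent not in CONTRACTS:
        raise LookupError(f"No output contract defined for intent {intent!r}")
    return CONTRACTS[intent]
```

The design choice worth noticing is the failure mode: an intent without a contract raises an error instead of falling back to a default form, which is one way to keep defaults from quietly standing in for decisions.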

Where does your product connect to the outside world, and why? MCP connectors, Slack integrations, email actions, and the like are product decisions, not just technical ones. When you let an agent act on a user’s behalf, what should it do? When should it ask first? Just because you CAN connect to everything doesn’t mean you’ve thought through which connections are right and what the experience around them should feel like. For example, some customers may prefer a WhatsApp connection over a Slack integration for a specific task, and vice versa. How do you decide which channel to route to?

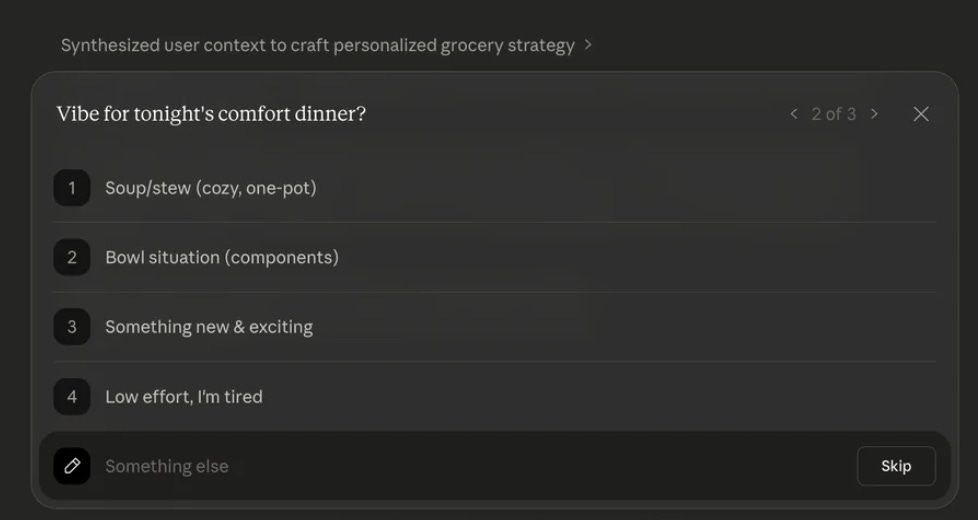

When do you ask vs. when do you answer? Follow-up questions are powerful when used well and deeply annoying when overused. Getting this wrong in either direction erodes trust fast. This isn’t a prompt engineering problem — it’s a product design problem, and someone has to have a point of view on it.

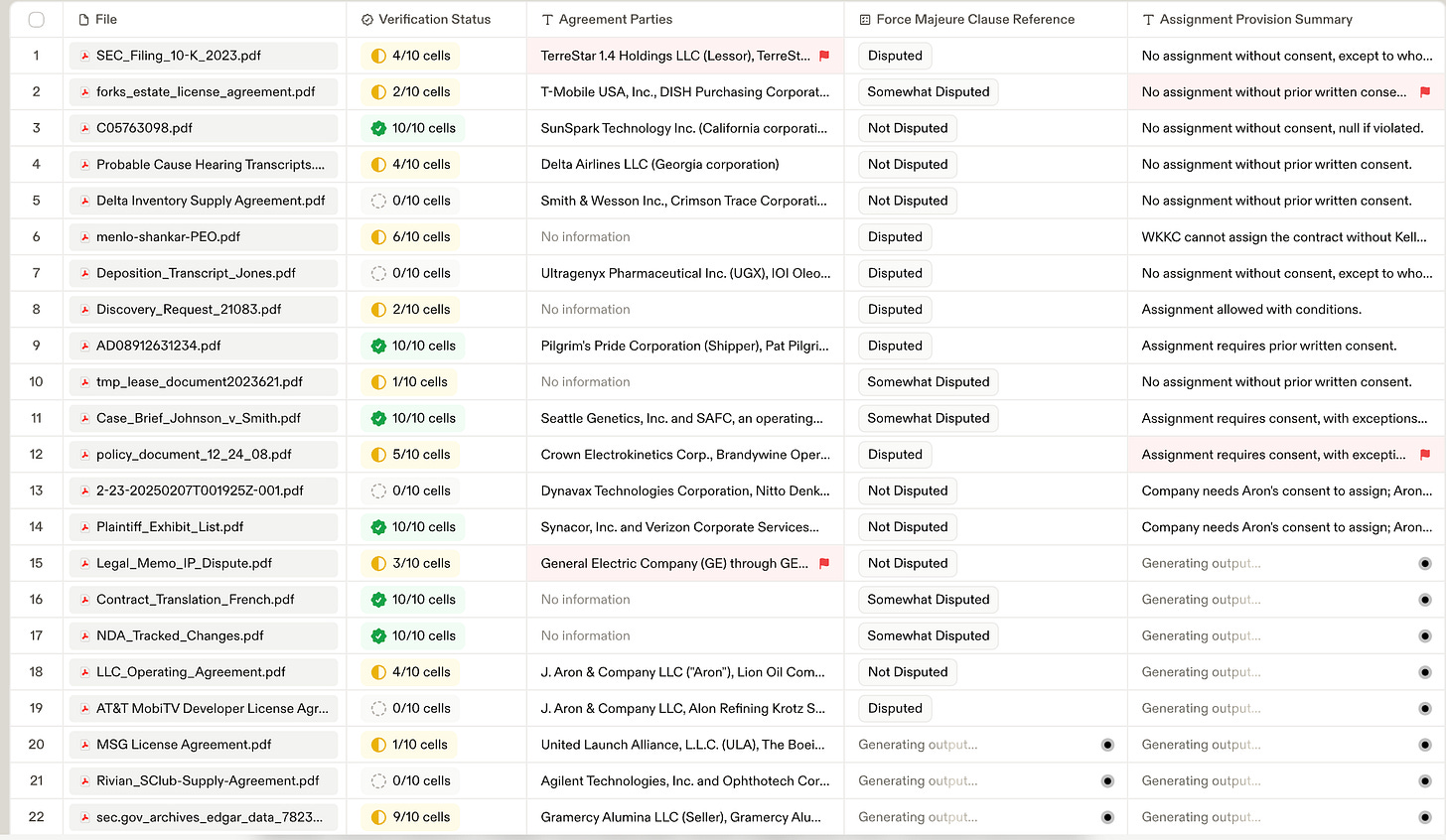

How do you know if the product is actually working? You can’t QA non-deterministic outputs the way you QA a form submission. Eval platforms like Braintrust are emerging to help teams define what “good” looks like and measure against it. Teams without an eval framework are flying blind and may not realize it until users start churning. New simulation and UI/UX software is also emerging to test what users think about products.
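To illustrate what "define good and measure against it" can mean at its simplest, here is a minimal sketch of an eval loop. It does not use the API of any eval platform; `EvalCase`, `run_evals`, and `fake_model` are hypothetical names, and the stub model stands in for a real, non-deterministic one. Because real outputs vary from run to run, each check is sampled several times and reported as a pass rate, not a single pass or fail.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class EvalCase:
    label: str                    # name of the property being checked
    prompt: str                   # input sent to the model
    check: Callable[[str], bool]  # returns True if the output is "good"


def run_evals(generate: Callable[[str], str],
              cases: List[EvalCase],
              samples: int = 5) -> Dict[str, float]:
    """Sample each case several times and return the pass rate per label."""
    results: Dict[str, float] = {}
    for case in cases:
        passed = sum(1 for _ in range(samples)
                     if case.check(generate(case.prompt)))
        results[case.label] = passed / samples
    return results


def fake_model(prompt: str) -> str:
    # Stub standing in for a real model call (an assumption for this sketch).
    return "Refunds are available within 30 days. Source: refund-policy.md"


cases = [
    EvalCase("cites_source", "What is the refund window?",
             lambda out: "Source:" in out),
    EvalCase("is_concise", "What is the refund window?",
             lambda out: len(out) <= 200),
    EvalCase("mentions_60_days", "What is the refund window?",
             lambda out: "60 days" in out),
]

scores = run_evals(fake_model, cases)
```

The checks themselves are the product decisions. The loop is trivial; deciding that an answer must cite a source and stay under a length limit is the part someone has to own.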

What are your guardrails? What does good output look like for your specific use case and user? That definition has to exist before guardrails can enforce it. Most AI product failures aren’t model failures — they’re product failures upstream. Nobody defined what “good” meant, so the model had no chance of reliably producing it.

What happens when something goes wrong? Most teams design recovery as a single fallback: show an error message. That’s not recovery. A production-grade AI product needs explicit paths for retry, fallback, and escalation to human review. That also means thinking through permissions upfront: too broad and agents take actions outside the user’s intent; too narrow and they interrupt constantly. And it means specifying what happens at handoffs, because that’s where context goes missing and neither side notices. The teams that skip this layer ship prototypes. The teams that build it ship products.
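The three recovery paths named above (retry, fallback, escalation to human review) can be sketched in a few lines. This is an illustrative outline under our own assumptions, not a production pattern from any specific company: `run_with_recovery`, `Outcome`, and the `primary`, `fallback`, and `is_acceptable` callables are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Outcome:
    path: str               # "primary", "fallback", or "human_review"
    output: Optional[str]   # None when escalated to a human
    attempts: int           # total calls made across primary and fallback


def _try(step: Callable[[str], str], request: str,
         is_acceptable: Callable[[str], bool]) -> Optional[str]:
    """Run one step; treat exceptions and unacceptable output as failure."""
    try:
        output = step(request)
    except Exception:
        return None
    return output if is_acceptable(output) else None


def run_with_recovery(request: str,
                      primary: Callable[[str], str],
                      fallback: Callable[[str], str],
                      is_acceptable: Callable[[str], bool],
                      max_retries: int = 2) -> Outcome:
    attempts = 0
    # Path 1: the primary step, retried up to max_retries extra times.
    for _ in range(1 + max_retries):
        attempts += 1
        output = _try(primary, request, is_acceptable)
        if output is not None:
            return Outcome("primary", output, attempts)
    # Path 2: a simpler fallback (for example, a canned or cached answer).
    attempts += 1
    output = _try(fallback, request, is_acceptable)
    if output is not None:
        return Outcome("fallback", output, attempts)
    # Path 3: nothing acceptable was produced, so hand off to a person.
    return Outcome("human_review", None, attempts)
```

Note that `is_acceptable` is where the definition of "good" from the guardrails question re-enters: without it, the code cannot tell a bad answer from a good one, and the only failure it can recover from is an exception.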

A Few Companies Have Been Specifically Thoughtful About This:

Linear has been the clearest public articulator of what this looks like in practice. Rather than building a chat-first interface and bolting structure on top, they built a full functional application and then added AI into it. The AI works within the context the product creates, rather than replacing the product with a conversation. The result is that users always know what the agent did, can see it, and can intervene. Karri’s framing: the interface should never make you feel like something happened that you didn’t expect and couldn’t stop.

Harvey went deep on the question of what trust looks like in a high-stakes vertical. Legal professionals need to understand not just the output, but the reasoning behind it — the citations, the logic, the chain of inference. Harvey’s design team built transparency into the core of the product: every reasoning step is surfaced, every citation is auditable. Their principle: “every interface element is built to reflect clarity, accountability, and the rigor that legal work demands.” This is not a compliance feature. It’s the product decision that earns the user’s trust to actually act on what Harvey tells them.

Cursor has built its product around the single question of when to suggest versus when to ask — when to autocomplete versus when to pause. They’ve developed unusually strong intuitions about the human-AI handoff in the coding context, and those intuitions show up in how sticky the product is.

In Conclusion

The risk in the vibe coding era is precisely what Karri described: mistaking output for design. You can ship a lot of things, but that is not the same as shipping the right thing, in the right form, with the right guardrails around it.

The PM and product function matters more in an AI-first company. Someone has to own the output contract and define what “good” looks like before the evals can measure it. Someone has to decide whether a given interaction should route to Slack, stay in-app, ask a clarifying question, or just answer. Those decisions don’t make themselves, and the model won’t make them for you.

We continue to look for founders who have an unusually sharp intuition for user experience in AI-native contexts and a genuine, specific point of view on how their particular user wants to interact with AI output, what form it should take, and when the product should get out of the way. That instinct is increasingly the thing that separates the products people use every day from the ones they demo once and forget.